“Multi-cloud” means multiple public clouds. A company with a multi-cloud deployment uses public clouds from more than one cloud provider. Instead of relying on a single vendor for cloud hosting, storage, and the full application stack, a business in a multi-cloud configuration uses several¹.

Multi-cloud deployments have several advantages. A multi-cloud deployment can leverage multiple IaaS (Infrastructure-as-a-Service) vendors, or it can use a different vendor for each of its IaaS, PaaS (Platform-as-a-Service), and SaaS (Software-as-a-Service) services. Multi-cloud can be used purely for redundancy and system backup, or it can incorporate different cloud vendors for different services¹. It also gives more flexibility to reduce cost and improve efficiency.

In the last few years, more and more cloud providers, AWS and Azure among them, have started offering FPGAs as a computing platform. In the FPGA domain, however, multi-cloud deployment is still very challenging.

Before you deploy your application on an FPGA, the FPGA must be programmed with a configuration file that contains the custom architecture for the application you are trying to accelerate. The configuration file (called a bitstream) is the equivalent of the binary file in the CPU world. Unfortunately, the configuration file is different for each FPGA vendor and, even worse, it is different for each FPGA card. (Imagine if you needed a different binary file for each kind of x86 processor (e.g. i3, i5, i7) and had to keep an artifact repository for each one.) To make things worse, in many cases the program that invokes the FPGA accelerator also needs to include information about the configuration file. For example, the source code that runs on the CPU must specify where to find the configuration file that programs the FPGA before it invokes the function you want to accelerate.

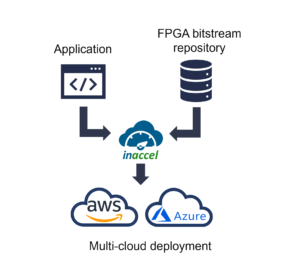
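To make the coupling concrete, here is a minimal Python sketch of host code that has to know which bitstream belongs to which card. Everything in it is hypothetical: the vendor and card names, the file paths, and the function names are placeholders for illustration, and the actual FPGA programming call (which is vendor-specific) is only described in a comment.

```python
# Hypothetical table baked into the host application: one bitstream per
# (vendor, card) pair. Names and paths are illustrative assumptions.
BITSTREAM_PATHS = {
    ("vendor-a", "card-1"): "/opt/bitstreams/compress.vendor-a.card-1.bin",
    ("vendor-a", "card-2"): "/opt/bitstreams/compress.vendor-a.card-2.bin",
    ("vendor-b", "card-9"): "/opt/bitstreams/compress.vendor-b.card-9.bin",
}


def bitstream_path(vendor: str, card: str) -> str:
    """Return the bitstream built for this exact card, or fail."""
    key = (vendor, card)
    if key not in BITSTREAM_PATHS:
        raise LookupError(f"no compress bitstream built for {vendor}/{card}")
    return BITSTREAM_PATHS[key]


def compress_on_fpga(vendor: str, card: str, data: bytes) -> str:
    path = bitstream_path(vendor, card)
    # A real host program would now call the vendor runtime to load `path`
    # and then launch the kernel; both steps are omitted in this sketch.
    return f"load {path}, then compress {len(data)} bytes"
```

Every new card or cloud means another entry in the table and another release of the host application, which is the maintenance burden the rest of this post tries to remove.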

As you can see, all these challenges make deploying FPGAs in the cloud much harder, and multi-cloud deployment harder still. To solve this problem we had to develop a new way of thinking that makes things much simpler. The first step was the development of an FPGA repository that can store several configuration files for different kinds of FPGA cards and different FPGA vendors (or clouds). For example, an FPGA developer can implement an accelerator for compression and then upload the bitstream files for Intel, Xilinx, or Achronix FPGAs to the repository.
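The idea of such a repository can be sketched as a lookup keyed by accelerator name, vendor, and card. This is an in-memory illustration only, not the actual repository's interface: the class, its methods, the card names, and the `repo://` URIs are all assumptions made for this example.

```python
class BitstreamRepository:
    """Toy in-memory repository: (accelerator, vendor, card) -> bitstream URI."""

    def __init__(self):
        self._artifacts = {}

    def upload(self, accelerator, vendor, card, uri):
        # Uploading again for the same key replaces the previous artifact.
        self._artifacts[(accelerator, vendor, card)] = uri

    def resolve(self, accelerator, vendor, card):
        # Returns None when no bitstream was uploaded for this card.
        return self._artifacts.get((accelerator, vendor, card))

    def targets(self, accelerator):
        # All (vendor, card) pairs this accelerator has been built for.
        return sorted((v, c) for (a, v, c) in self._artifacts if a == accelerator)


# The FPGA developer uploads one build per target (card names are made up).
repo = BitstreamRepository()
repo.upload("compression", "intel", "card-a", "repo://compression/intel/card-a")
repo.upload("compression", "xilinx", "card-b", "repo://compression/xilinx/card-b")
repo.upload("compression", "achronix", "card-c", "repo://compression/achronix/card-c")
```

With this shape, the application only needs to name the accelerator ("compression"); the vendor and card are filled in by whoever knows what hardware is present on the node.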

The second step was the development of an FPGA resource manager that abstracts away the FPGA resources and makes resource management, sharing, and scaling of FPGA applications much easier. The Coral FPGA resource manager, working together with the FPGA repository, decouples the software developer from the FPGA developer. The main advantage of this approach is that it allows instant multi-cloud FPGA deployment: the same application can be deployed on several nodes across clouds, and the FPGA artifact manager is used to configure each FPGA with the right bitstream file.
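The decoupling described above can be illustrated with a small Python sketch of a resource manager that matches a requested accelerator to a free device and the bitstream built for it. This is not Coral's API; the `Device` and `ResourceManager` names, the node and card names, and the URIs are hypothetical and exist only to show the idea.

```python
from dataclasses import dataclass


@dataclass
class Device:
    node: str    # hypothetical node name, e.g. one per cloud
    vendor: str
    card: str
    busy: bool = False


class ResourceManager:
    """Toy manager: the caller names an accelerator, never a bitstream."""

    def __init__(self, devices, bitstreams):
        self.devices = list(devices)
        # (accelerator, vendor, card) -> bitstream URI, as held by a repository.
        self.bitstreams = dict(bitstreams)

    def acquire(self, accelerator):
        for dev in self.devices:
            uri = self.bitstreams.get((accelerator, dev.vendor, dev.card))
            if uri is not None and not dev.busy:
                dev.busy = True  # a real manager would program the FPGA here
                return dev, uri
        raise RuntimeError(f"no free device with a bitstream for {accelerator!r}")

    def release(self, dev):
        dev.busy = False


manager = ResourceManager(
    devices=[Device("cloud-1-node", "xilinx", "card-b"),
             Device("cloud-2-node", "intel", "card-a")],
    bitstreams={("compression", "xilinx", "card-b"): "repo://compression/xilinx/card-b",
                ("compression", "intel", "card-a"): "repo://compression/intel/card-a"},
)
first_dev, first_uri = manager.acquire("compression")
second_dev, second_uri = manager.acquire("compression")
```

The application code is identical on both nodes; only the bitstream chosen by the manager differs, which is what makes the same deployment portable across clouds.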

For example, the MORPHEMIC project allows proactive adaptation in a multi-cloud environment. Proactive adaptation is not based only on the current execution context and conditions; it also aims to forecast future resource needs and possible deployment configurations. MORPHEMIC also supports accelerating applications with FPGAs. To this end, the InAccel Coral FPGA resource manager has been developed to allow multi-cloud deployment in the most efficient way. Users can now speed up their applications using FPGAs on any cloud that meets the application requirements, without being locked in to a specific FPGA or cloud vendor.