What is Homomorphic Encryption and why FPGAs are the ideal platform to speed up HE

FPGAs are an ideal platform for homomorphic encryption due to their ability to parallelize computations and their power-efficiency. They offer a powerful and efficient way to perform the complex mathematical operations required for homomorphic encryption, making them a key technology for secure data processing.
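To give a feel for the kind of arithmetic involved, here is a minimal sketch of an additively homomorphic scheme (a toy Paillier cryptosystem) in Python. It is an illustration only, not InAccel's implementation: the function names are hypothetical and the tiny primes are insecure placeholders. Real deployments use moduli thousands of bits wide, and it is those large modular exponentiations that make parallel hardware attractive.

```python
import math
import random

def keygen(p=293, q=433):
    """Build a toy Paillier key pair from two small primes (insecure, demo only)."""
    n = p * q
    lam = (p - 1) * (q - 1) // math.gcd(p - 1, q - 1)  # lcm(p-1, q-1)
    g = n + 1                 # common simple choice of generator
    mu = pow(lam, -1, n)      # with g = n + 1, mu is the inverse of lam mod n
    return (n, g), (lam, mu)

def encrypt(pub, m):
    """Encrypt an integer 0 <= m < n under the public key."""
    n, g = pub
    n2 = n * n
    while True:
        r = random.randrange(1, n)
        if math.gcd(r, n) == 1:   # r must be invertible mod n
            break
    return (pow(g, m, n2) * pow(r, n, n2)) % n2

def decrypt(pub, priv, c):
    """Recover the plaintext from ciphertext c."""
    n, _ = pub
    lam, mu = priv
    x = pow(c, lam, n * n)
    return ((x - 1) // n * mu) % n

def add_encrypted(pub, c1, c2):
    """Multiplying ciphertexts adds the underlying plaintexts (mod n)."""
    n, _ = pub
    return (c1 * c2) % (n * n)

pub, priv = keygen()
c_sum = add_encrypted(pub, encrypt(pub, 17), encrypt(pub, 25))
print(decrypt(pub, priv, c_sum))  # the sum is computed without decrypting the inputs
```

Every encryption and decryption here is dominated by `pow(..., ..., n * n)`, that is, modular exponentiation over very wide integers, and independent ciphertexts can be processed side by side.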

Read more

InAccel announces new partnership with Watad, the premier AI Solution Provider company in Saudi Arabia and UAE

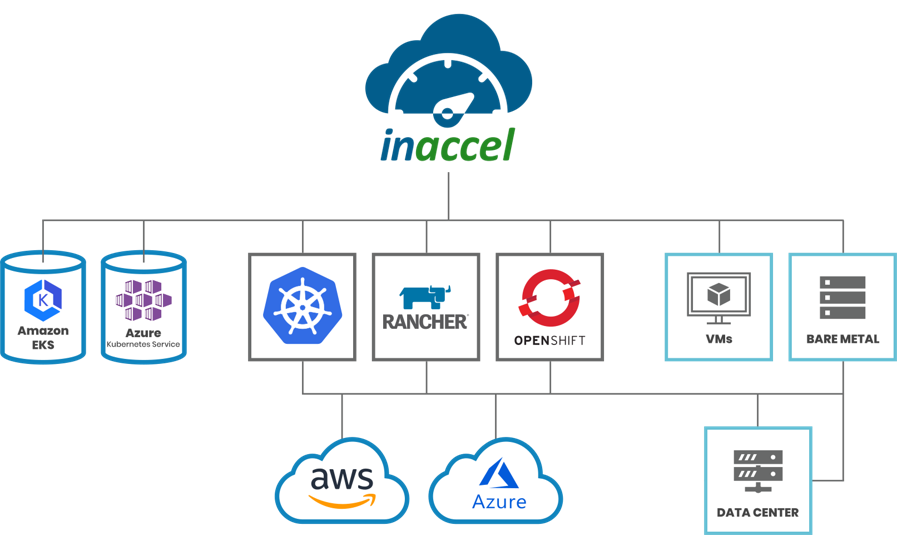

InAccel, the leading provider of cloud native deployment technology for FPGAs, is excited to announce a partnership with Watad, the premier AI Solution Provider company in Saudi Arabia and UAE. Through this partnership, Watad will be able to leverage InAccel's innovative technology to easily deploy, scale, and manage FPGA clusters in the cloud, providing their clients with the best possible FPGA deployment in the Middle East. InAccel's unique technology streamlines the process of deploying FPGAs in the cloud, making it more accessible and cost-effective for businesses of all sizes. With InAccel, Watad can confidently offer their clients the most advanced and reliable FPGA deployment solutions in the region.

Read more

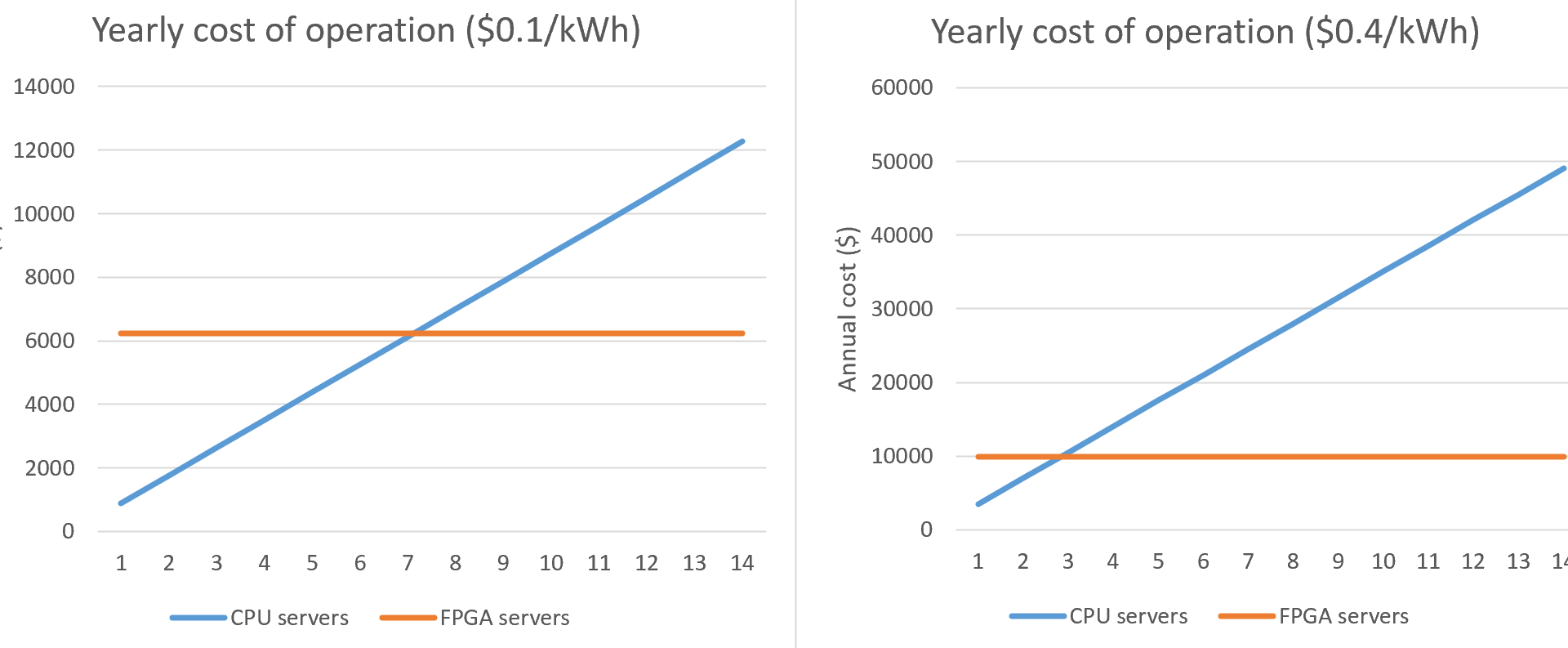

How to reduce a data center's OpEx during the energy crisis

FPGA-based servers can provide up to 5x lower OpEx than typical processor-based servers. Now that energy costs are rising significantly, it makes even more sense to migrate your workloads to FPGA-based servers.

Read more

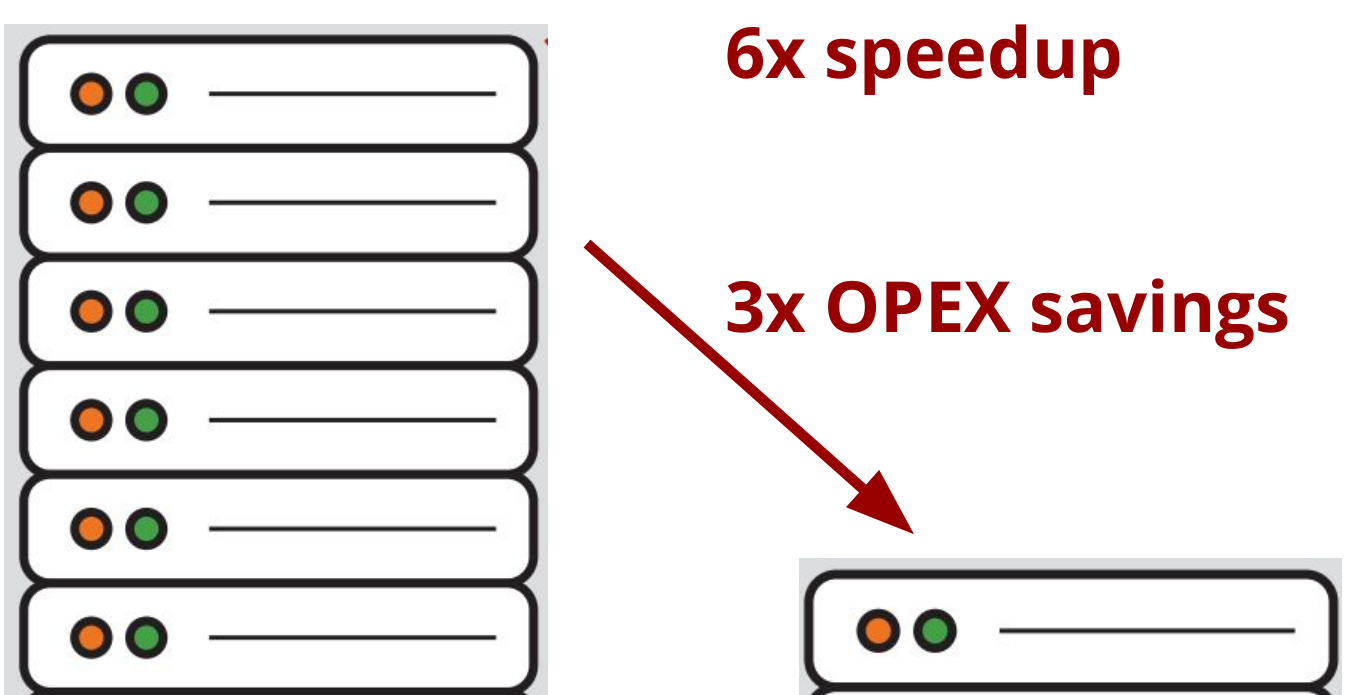

FPGAs can be used not only to speed up ZKP applications but also to reduce the TCO of cloud or on-premise deployments by more than 3x

Read more

World’s first end-to-end integration of ZKP with FPGAs.

Today InAccel released the first end-to-end integration of ZKP with FPGAs: a web-based playground that allows anyone to execute ZKP circuits on top of FPGAs, based on ZoKrates and Circom. Users can test it at https://play.zkaccel.io/ . The playground currently uses an open-source FPGA accelerator with limited performance, but it can support any other FPGA accelerator.

Read more

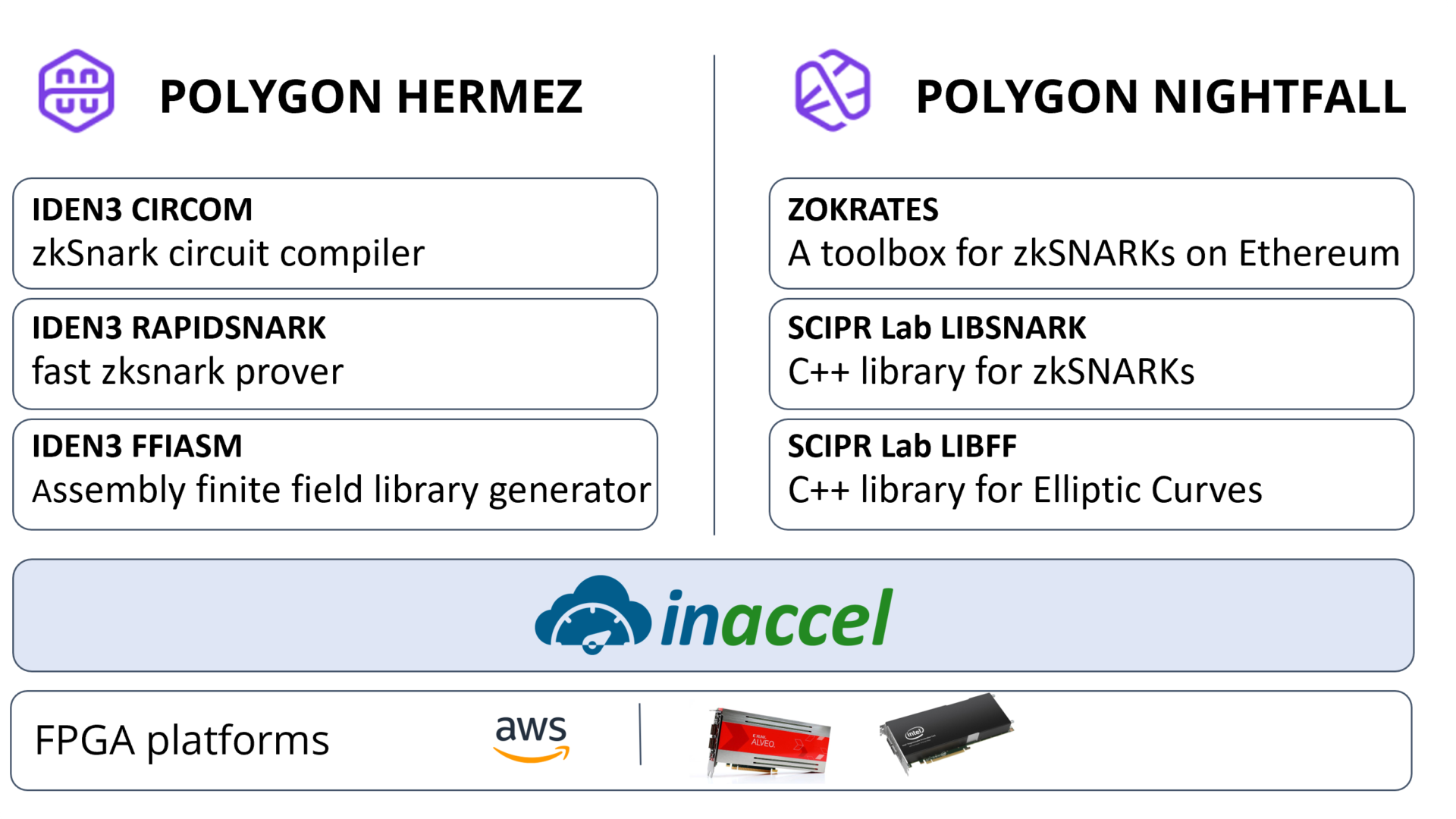

Is ZKP the next Killer FPGA application?

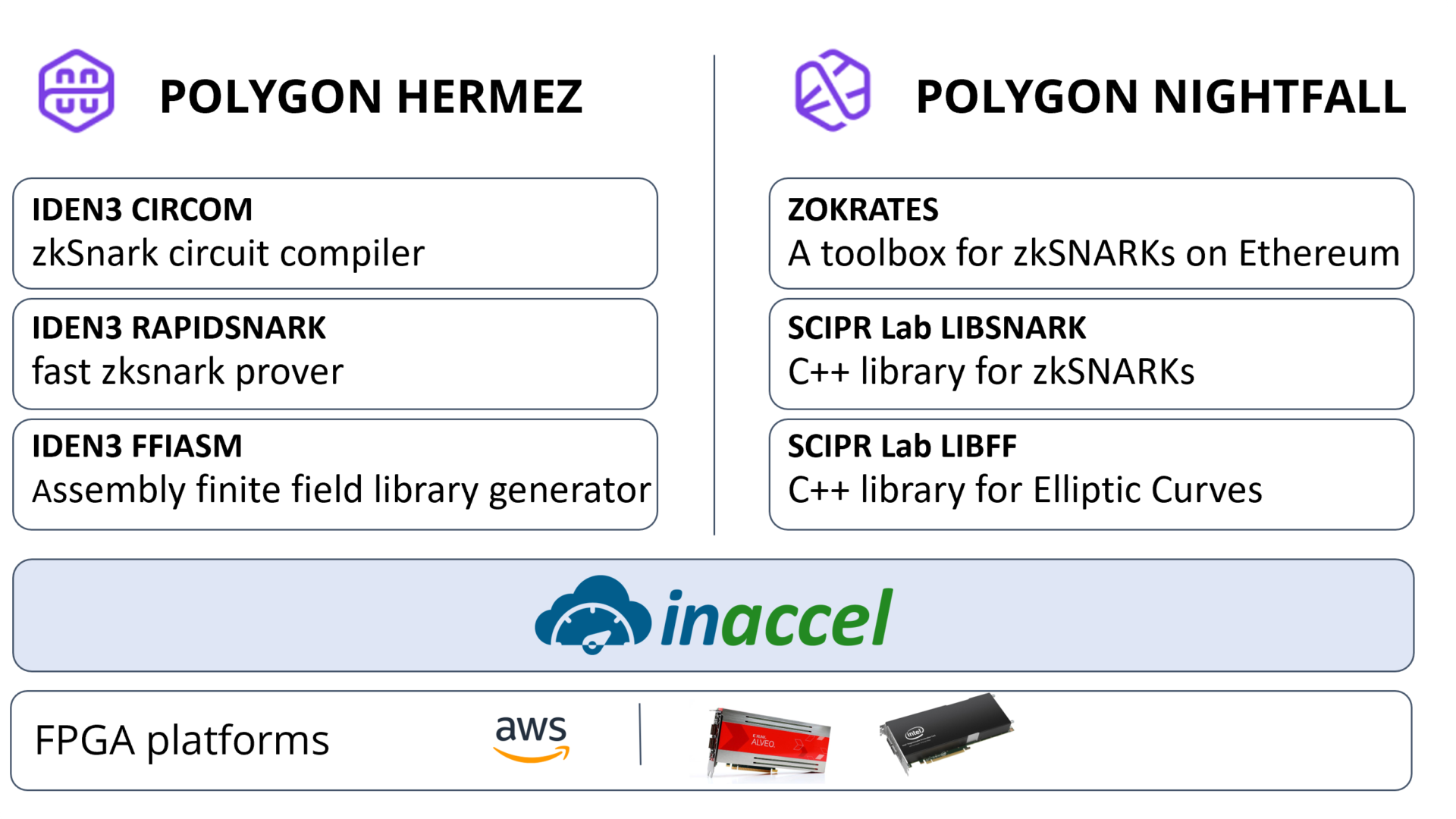

Using the InAccel software stack (resource management and accelerator orchestration) we enabled the fast and efficient integration of FPGA provers with two state-of-the-art ZK circuit compilers, namely Circom and ZoKrates.

Read more

How FPGAs can be deployed in data centers efficiently

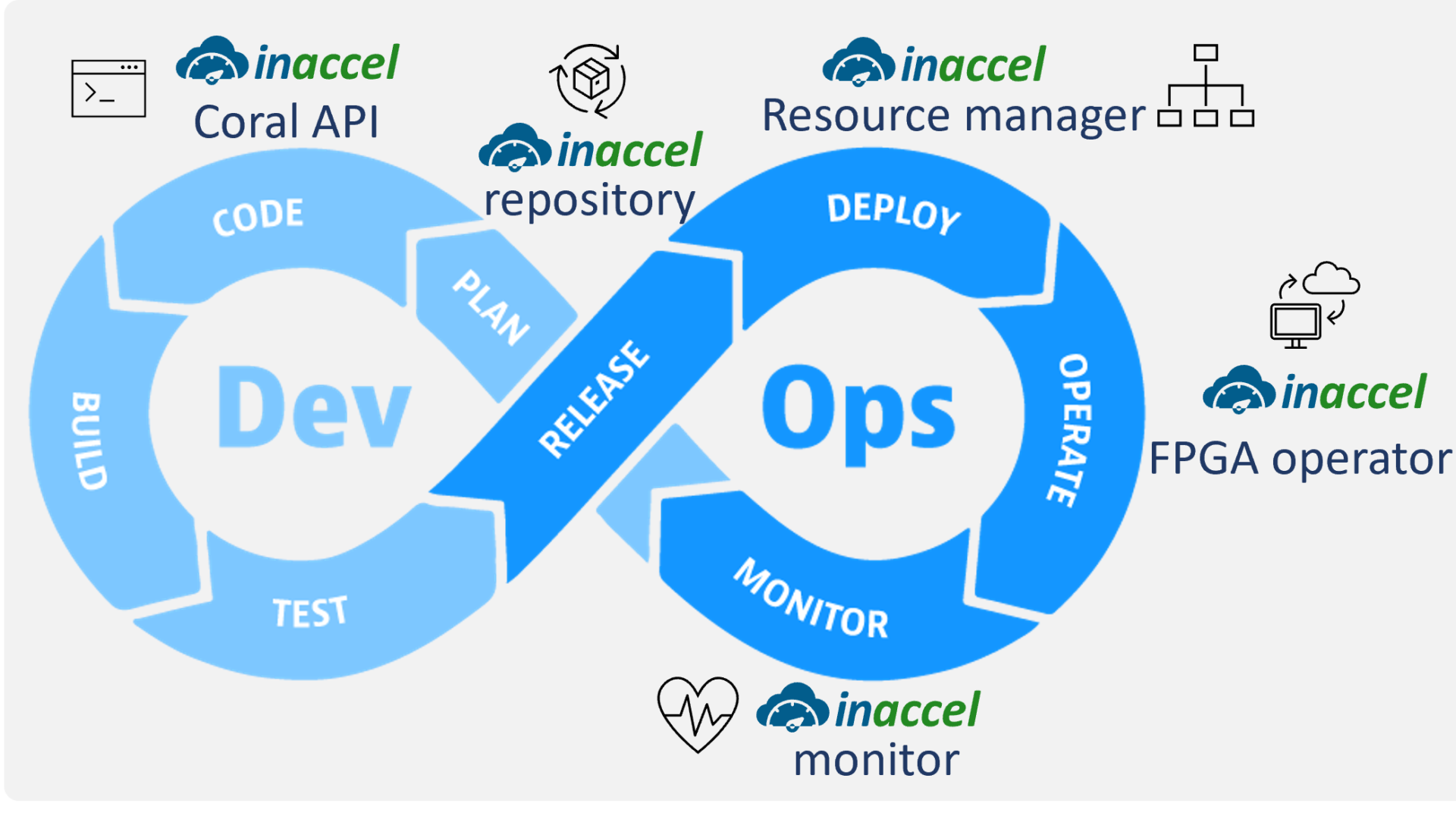

The FPGA operator allows cluster admins to manage their remote FPGA-powered servers the same way they manage CPU-based systems. It also lets regular users target particular FPGA types and explicitly consume FPGA resources in their workloads. This makes it easy to bring up a fleet of remote systems and run accelerated applications without additional technical expertise on the ground.

Read more

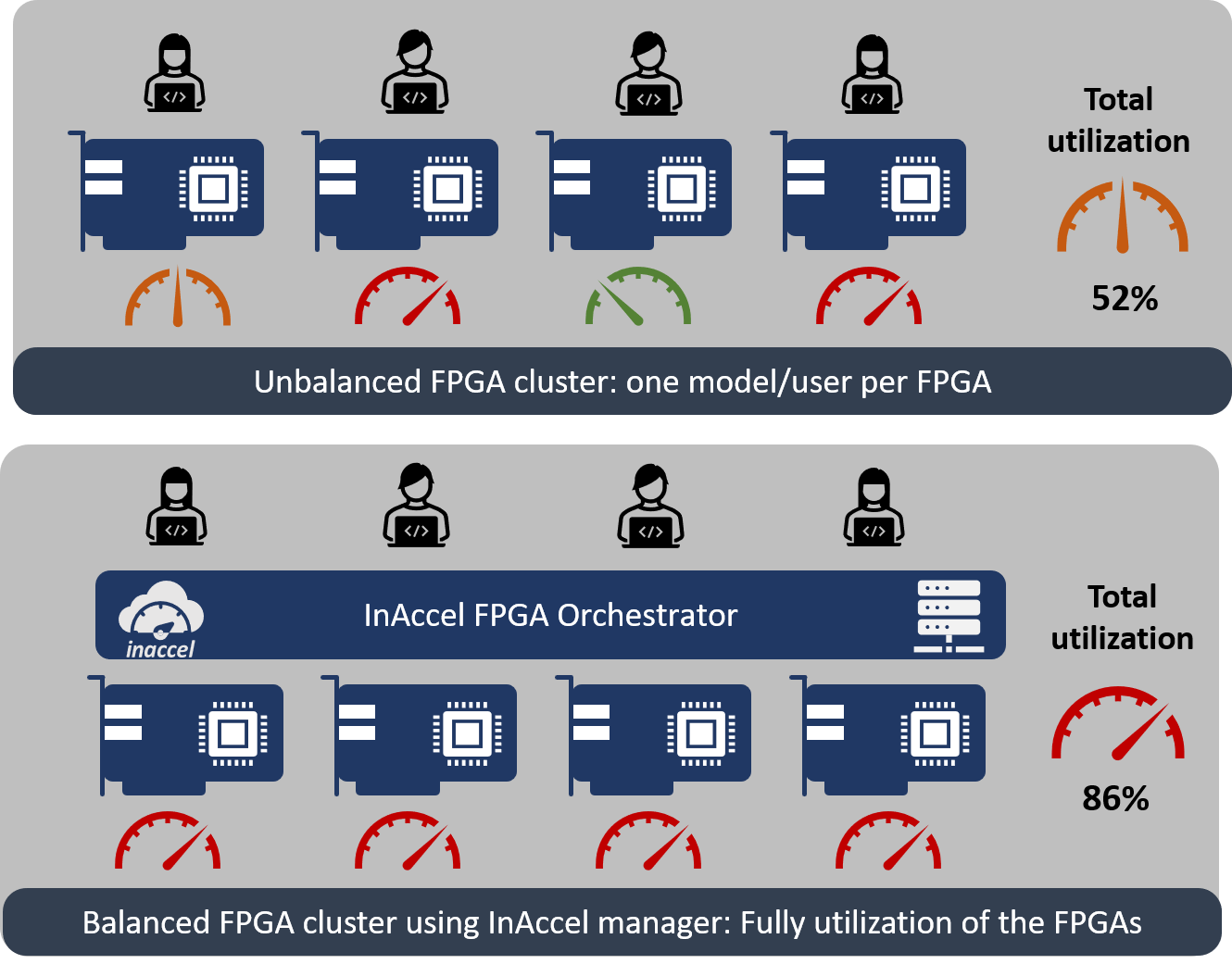

How better FPGA resource utilization can save you time and money

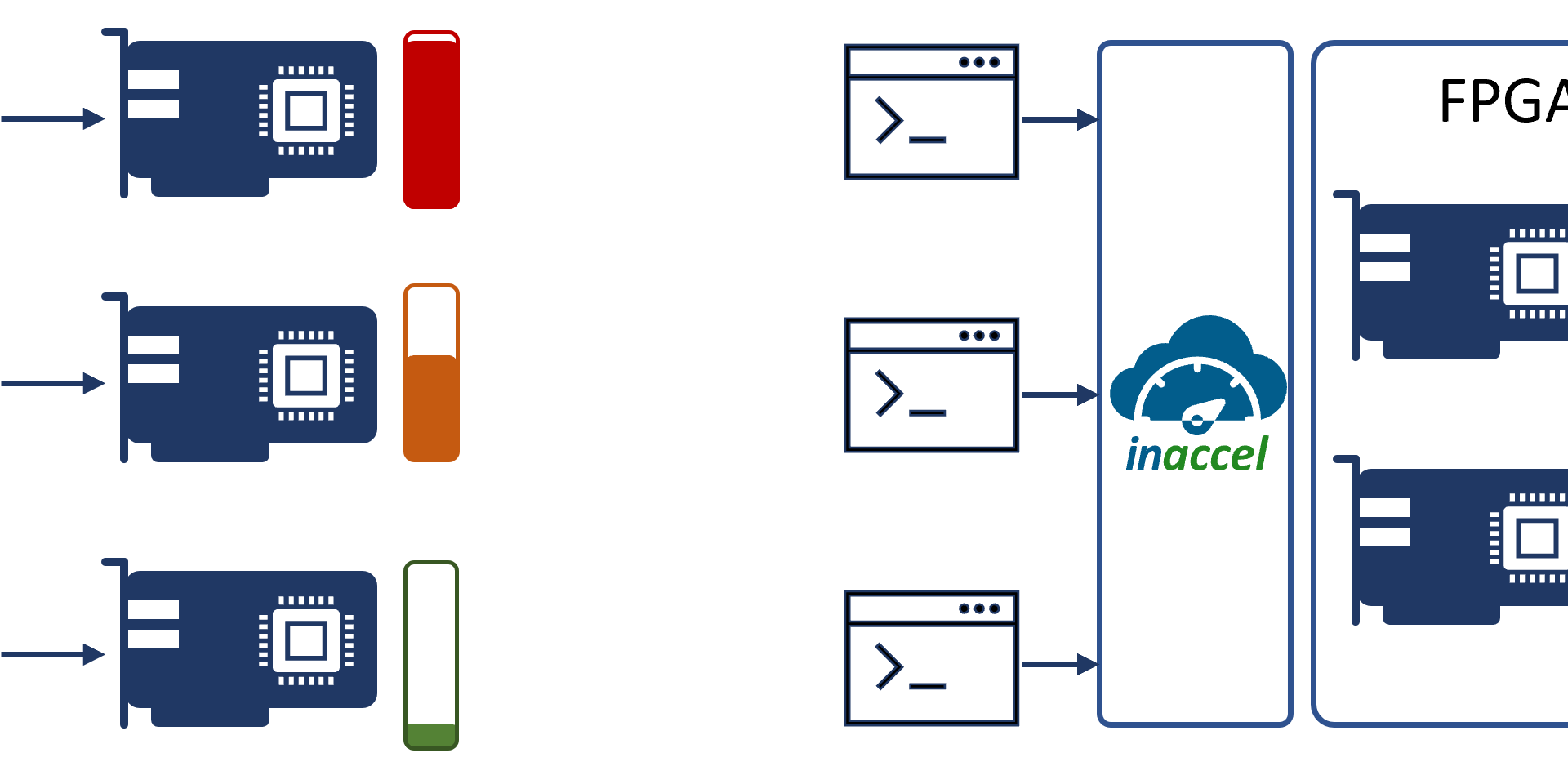

Hardware accelerators, like GPUs and FPGAs, offer much higher computing power than typical processors. This is why more and more cloud providers offer FPGAs and GPUs as computing resources. However, they are among the most expensive devices in a data center, so it is very important to make sure that all of the resources in a system are shared and as fully utilized as possible.

Read more

How Machine Learning can help reduce road accidents

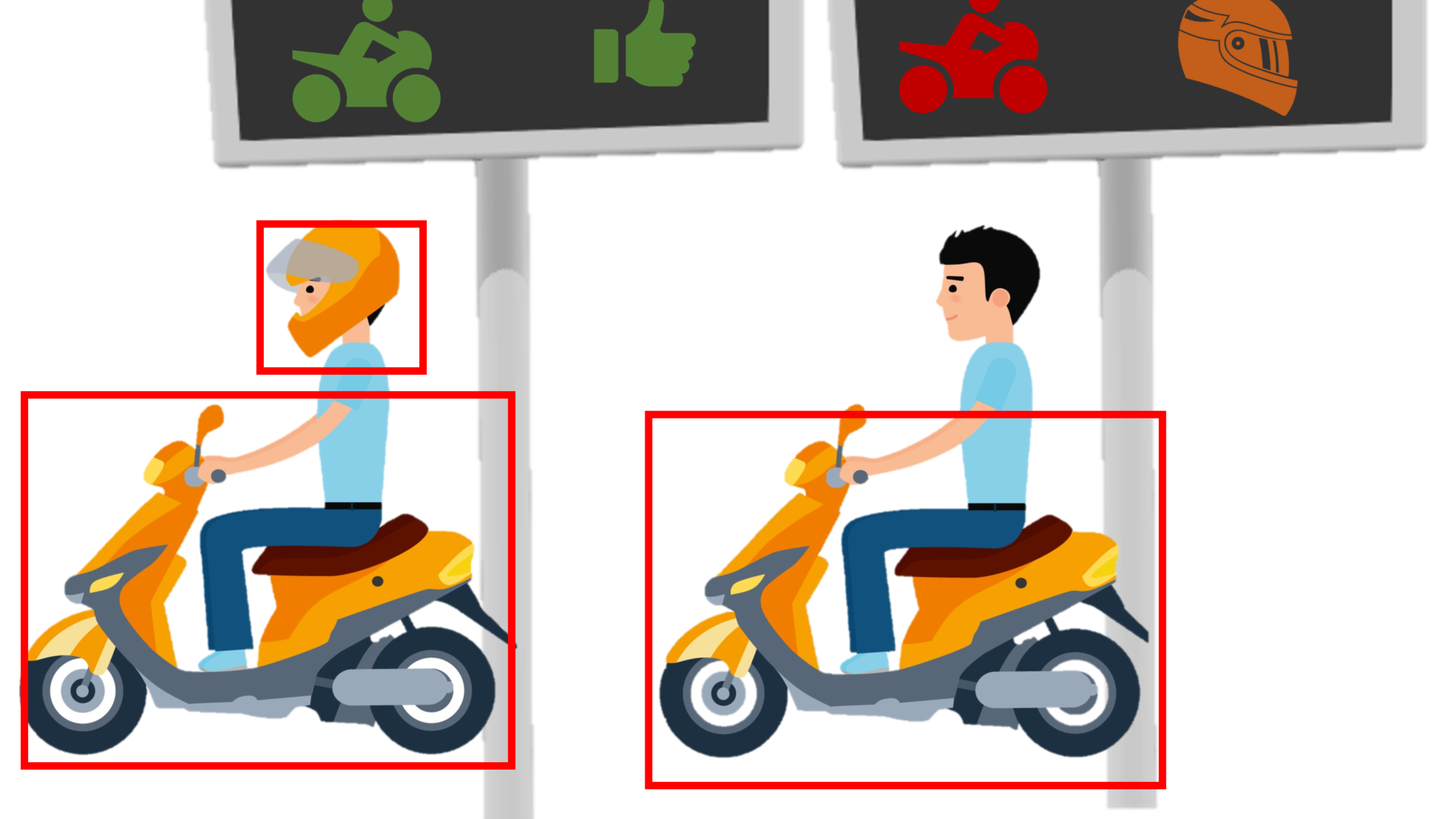

SafeDisplay automatically recognizes whether motorbike riders are wearing helmets and displays the information on the panel to encourage helmet use.

Read more

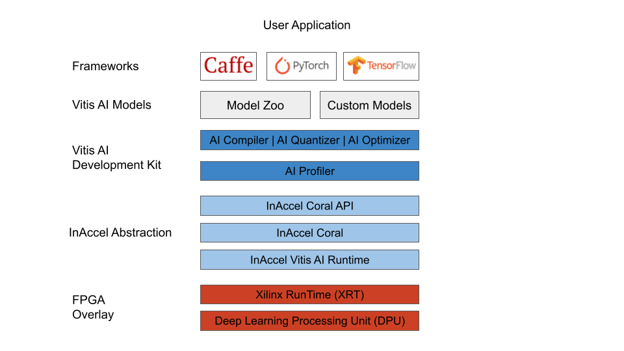

Easy deployment and scaling of Vitis AI accelerators using InAccel

To ease the deployment, scaling, and management of Vitis AI applications, InAccel has developed the InAccel Vitis AI runtime, an abstraction layer that hides the integration complexity from software developers and ML engineers and simplifies the deployment and maintenance of FPGA-powered AI services. It also offers FPGA provisioning and auto-scaling capabilities designed for production-grade requirements.

Read more

The main advantage of this approach is that it allows instant multi-cloud FPGA deployment: the same application can be deployed on several nodes across different clouds, and the FPGA artifact manager configures each FPGA with the right bitstream file.

Read more

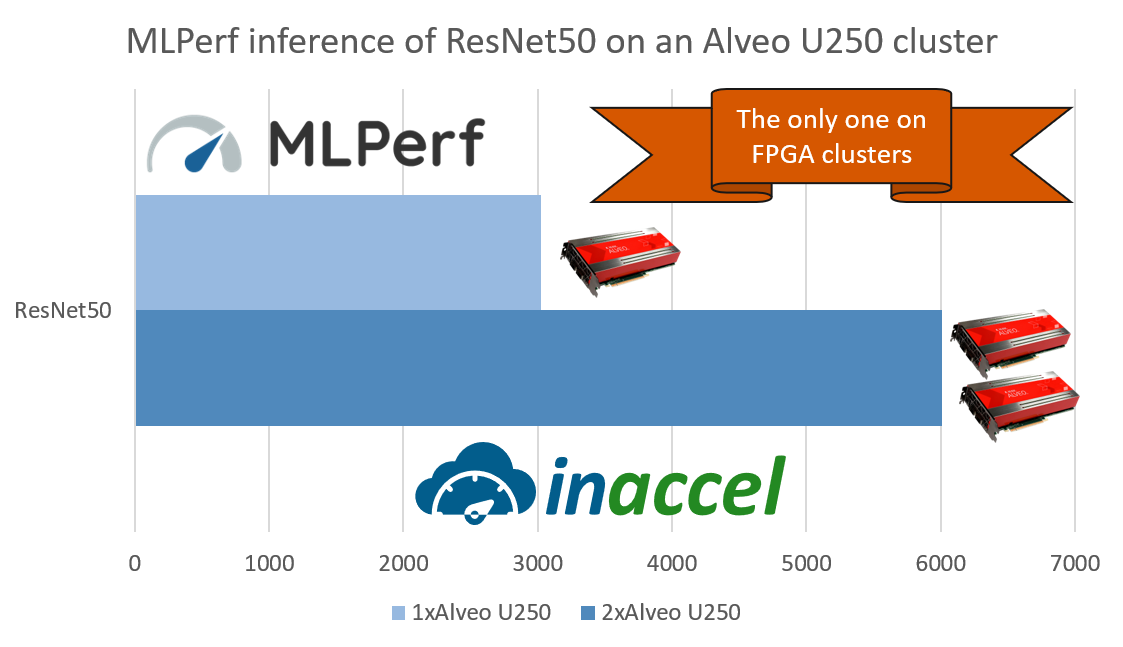

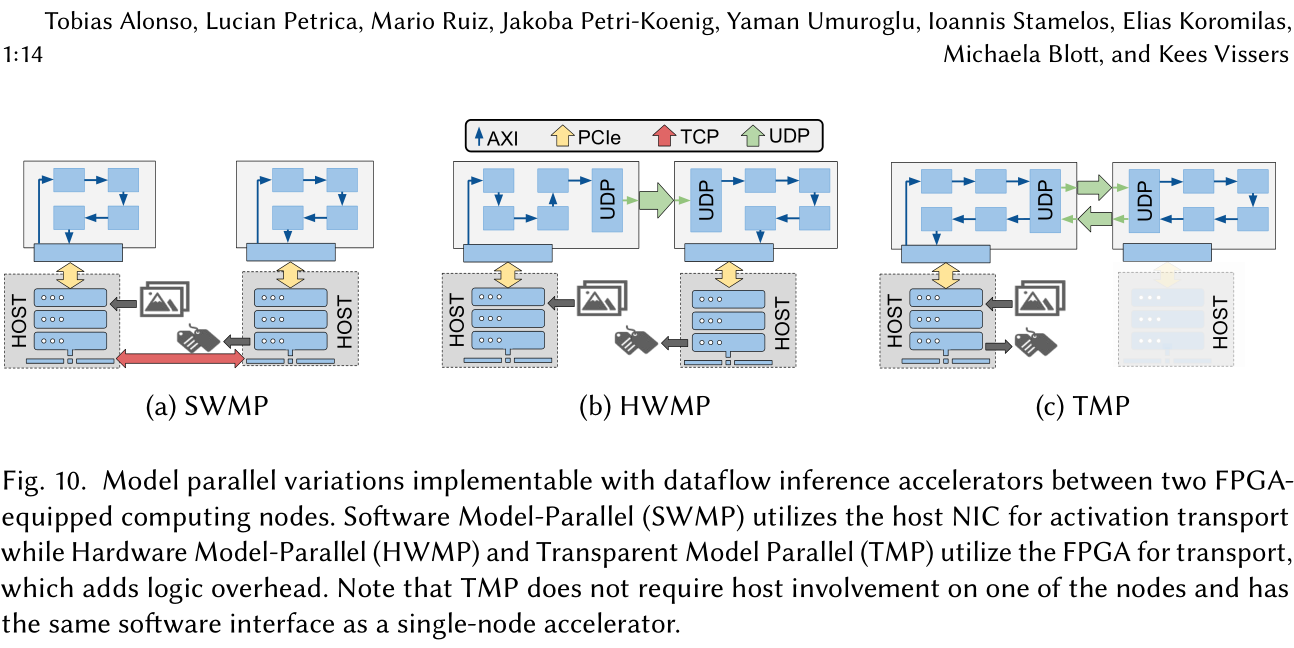

Scaling Performance of DNN Inference in FPGA clusters using InAccel orchestrator

How Xilinx used InAccel Coral to evaluate multi-FPGA deployment for DNN inference. The MLPerf evaluation on multi-FPGA accelerators was carried out using the InAccel Coral orchestrator and resource manager.

Read more

GPUs vs FPGAs: Which one is better in DL and Data Centers applications

However, there are several cases in the domain of deep learning in which GPUs are considered more powerful than FPGAs. Why, then, did AMD decide to acquire Xilinx for $35 billion instead of further advancing its own GPUs?

Read more

Simplify AI Deployment at scale on FPGA clusters

InAccel provides a unique FPGA cluster manager that allows easy deployment and scaling of AI and ML applications at production level. The FPGA manager can be used for both inference and training of ML models.

Read more

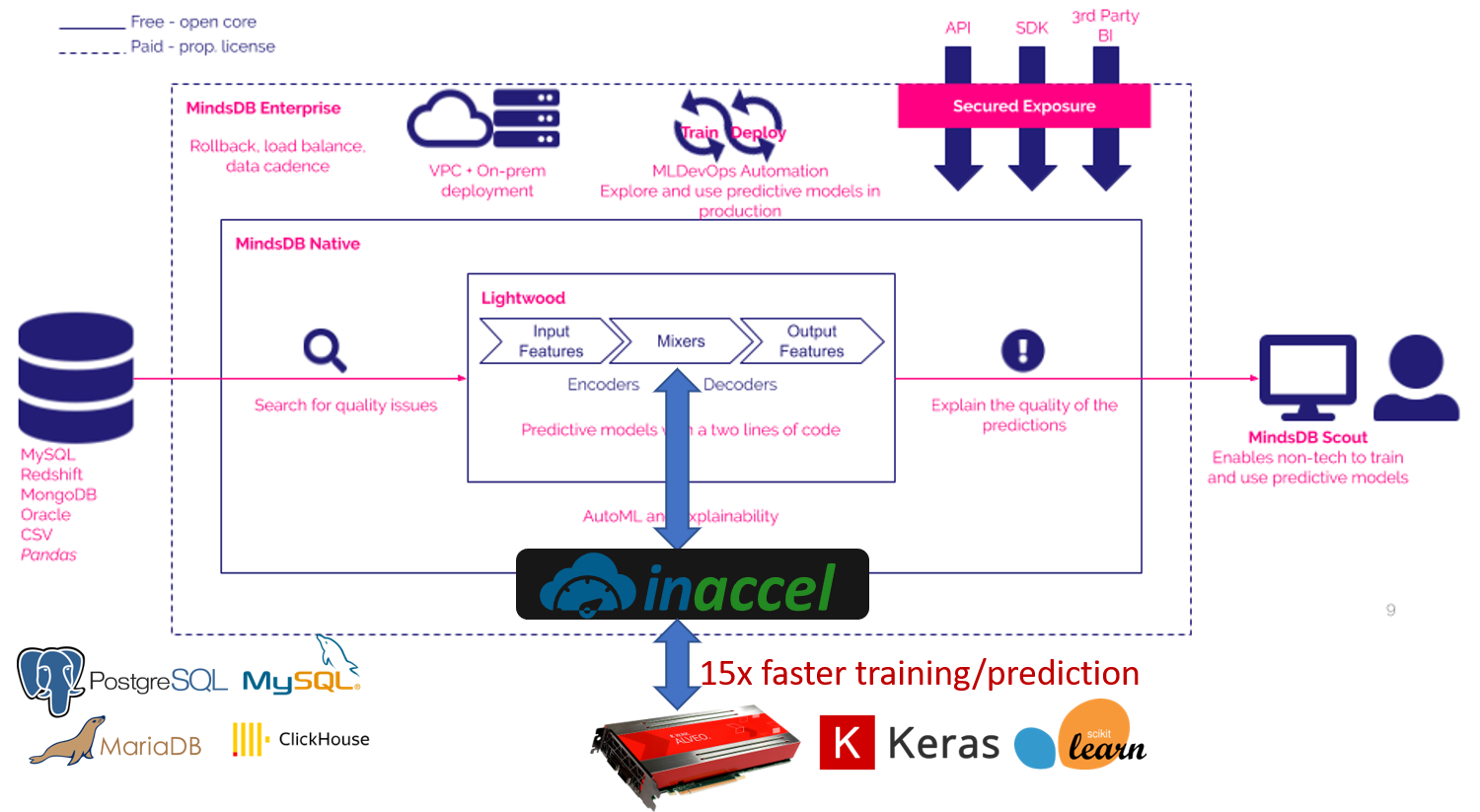

FPGA-accelerated ML on MindsDB's Lightwood

InAccel provides a Logistic Regression accelerator that speeds up the training of machine learning models. It is available for Intel's Arria 10 FPGAs, Amazon EC2 F1 instances, and Xilinx's Alveo U200, U250 and U280 FPGA platforms. We therefore developed a LogisticRegression class that can be selected as the desired mixer to create the Lightwood Predictor.

Read more

What is missing for the success of the AI HW chips

The latest version of the Coral resource manager allows the software community to instantiate and utilize a cluster of AI hardware accelerators with the same ease as invoking typical software functions. InAccel’s Coral resource manager allows multiple applications to share and utilize a cluster of accelerators in the same node (server) without worrying about the scheduling, load balancing, and resource management of each accelerator.

Read more

InAccel announces record-breaking speed on facial detection test using an FPGA cluster

InAccel, a world pioneer in the domain of FPGA-based accelerators, today released the results of a test in which a cluster of eight FPGAs in a single server was used to achieve 1266 fps in a facial detection speed test.

Read more

Accelerated Face detection on a cluster of 8 Alveo FPGA cards

InAccel has today released an integrated framework that makes it possible to use the power of an FPGA cluster for face detection. Specifically, InAccel has presented a demo in which a cluster of 8 FPGAs provides up to 1700 fps (supporting up to 56 cameras at 30 fps each in a single server).

Read more
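The camera count quoted for the 8-card Alveo cluster follows directly from the aggregate frame rate. As a rough sketch (the helper name `max_cameras` is hypothetical, and it assumes every camera streams at the same constant rate with no per-stream overhead):

```python
def max_cameras(cluster_fps: int, camera_fps: int) -> int:
    """Number of full-rate camera streams a given aggregate throughput can serve."""
    if camera_fps <= 0:
        raise ValueError("camera_fps must be positive")
    return cluster_fps // camera_fps

# 1700 fps aggregate throughput, 30 fps per camera
print(max_cameras(1700, 30))  # 56
```

The same calculation can be used to size a deployment for a different camera frame rate or a different cluster throughput.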