What is InAccel?

InAccel offers 1-click deployment of FPGA workloads on premises or in the cloud, using Docker, Podman, Singularity or Kubernetes. By taking care of the whole FPGA acceleration lifecycle, we make it possible for you to focus on the application. The limit is your imagination!

Easy Deployment

Automatic Scaling

Resource Orchestration

Resource Monitoring

Faster Execution

Cost Reduction

Trusted By

Products

InAccel started as an IP core production company and later transformed into the company it is today, with the aim of helping the adoption of FPGAs. InAccel still develops IP cores for customers and maintains a pool of available IP cores, mainly targeting the domain of Machine Learning.

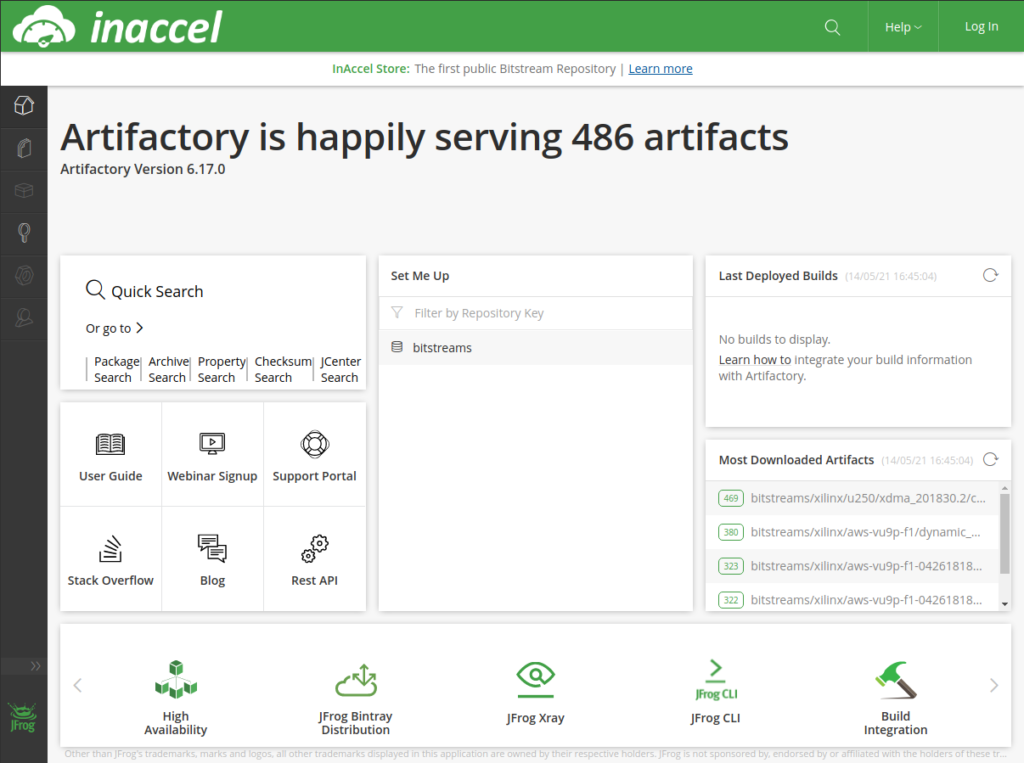

InAccel offers an end-to-end bitstream repository solution that covers the deployment lifecycle of your FPGA binaries: managing target platforms, versioning artifacts and distributing accelerators.

Coral is a framework that makes possible the distributed acceleration of large data sets across clusters of FPGA resources using simple programming models. It abstracts the FPGA resources into a pool of accelerators and takes care of scheduling and orchestrating jobs for acceleration.
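To make the "pool of accelerators" idea concrete, here is a minimal toy sketch in Python. It is not the Coral API and makes no claim about how Coral schedules work internally: the `AcceleratorPool` class, the `schedule` helper, the device names and the job durations are all hypothetical, and the policy shown (send each job to whichever device frees up first) is just one simple assumption.

```python
import heapq
from dataclasses import dataclass, field


@dataclass
class AcceleratorPool:
    """Toy stand-in for a pool of FPGA accelerators (hypothetical, not the Coral API)."""

    names: list
    # Min-heap of (time_when_free, accelerator_name) entries.
    _free_at: list = field(default_factory=list, init=False)

    def __post_init__(self):
        # Every accelerator is idle at time 0.
        self._free_at = [(0.0, name) for name in self.names]
        heapq.heapify(self._free_at)

    def submit(self, job_id, duration):
        """Assign the job to whichever accelerator becomes free first."""
        start, name = heapq.heappop(self._free_at)
        finish = start + duration
        heapq.heappush(self._free_at, (finish, name))
        return {"job": job_id, "accelerator": name, "start": start, "finish": finish}


def schedule(pool, jobs):
    """Dispatch (job_id, duration) pairs in arrival order."""
    return [pool.submit(job_id, duration) for job_id, duration in jobs]


if __name__ == "__main__":
    pool = AcceleratorPool(["fpga0", "fpga1"])
    for entry in schedule(pool, [("a", 3.0), ("b", 1.0), ("c", 2.0)]):
        print(entry)
```

The caller only names jobs and never picks a device. That is the point of the abstraction described above: the application sees a pool, and placement is left to the scheduler.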

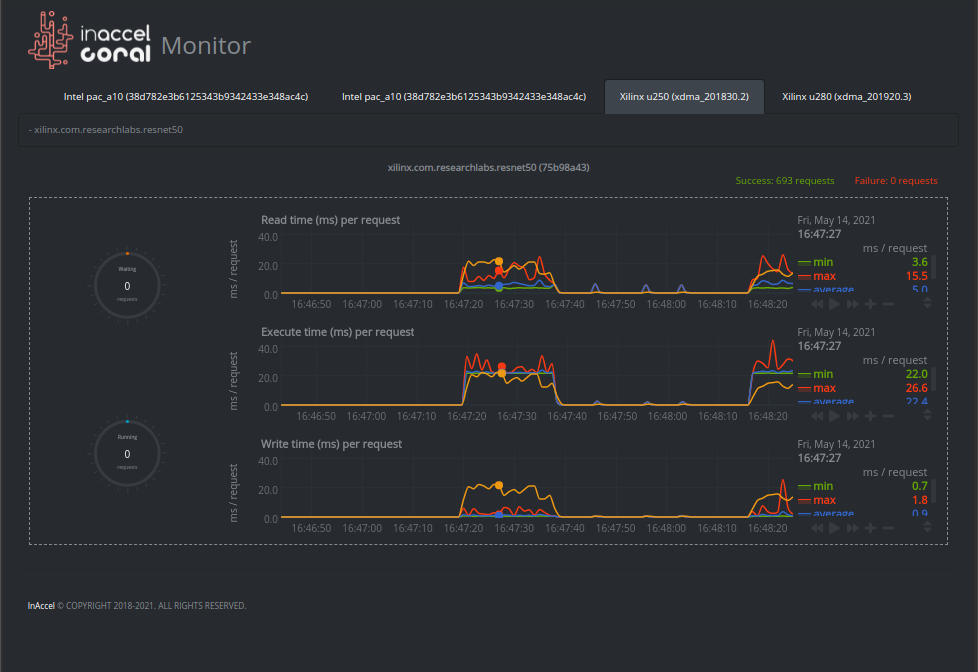

Coral monitor is a real-time monitoring tool designed specifically for custom resources like FPGAs and GPUs. It can provide power, thermal and structural information, as well as details of all running tasks.

Use Cases

InAccel provides all the tools required to get you started with hardware acceleration. It brings together version control of bitstream artifacts, resource orchestration and monitoring, so that users can benefit from the power of hardware accelerators while building scalable pipelines in environments they are already familiar with and delivering production-level, real-life applications. For any computationally intensive application, our products can be used to reduce both the execution time and the energy footprint.

Below you can take a quick look at ready-to-use demos for a wide range of use cases:
